|

This famous Buddhist quote is one of my personal favorites and one that Bruce Lee also used in one of his movies. Although it may seem more relevant to Eastern philosophy or martial arts, it actually has a lot of relevance in data science too. Through this blog, my books, and my videos, I’ve put forward some ideas and hopefully some useful knowledge for anyone interested in data science and A.I. However, it’s easy to mistake conviction for cult-like hegemony, something I’ve observed a lot on social media. Whenever someone competent enough to have a good professional role and some prestige comes along, many people choose to become his or her followers, treating that person as a guru of sorts. This, in my view, is one of the most toxic things someone can do and it’s best avoided at all costs.

That’s not to say that all those people who have followers are bad, far from it! However, the act of blindly following someone just because of their status and/or their conviction is dangerous. You may get lots of information this way, but you will lose the most important thing in your quest: initiative.

Of course, some of these people are happy to have a following and couldn’t care less about your loss of initiative. After all, they often measure their value in terms of how many followers they have, how many downloads their free book has, and how many likes they receive. This in and of itself should raise some serious red flags because no matter how much data science or A.I. know-how these individuals have, the path they are on doesn’t go anywhere good.

I’m a firm believer in free will and I value it more than anything else, especially in the domain of science. As data science (and A.I.) is part of this domain, it’s imperative to show respect to this quality, even at the expense of a large following. That’s why whenever I share something with you, be it some data science methodology, some A.I. system, some heuristic, or some ideas about our field, I expect you to experiment with it and draw your own conclusions. Don’t take my word for it, because even though I make an effort to verify everything I write about, some inaccuracies are inevitable. After all, data science and A.I. are not exact sciences!

Naturally, it takes more than experimentation to learn data science and A.I., but with some guidance, some contemplation, some skepticism, and some experimentation, it is quite doable to learn and eventually master this craft. That has been my experience both in my own journey in data science and A.I. and in the journeys of my mentees. Hopefully, your experience will be equally rewarding and educational...


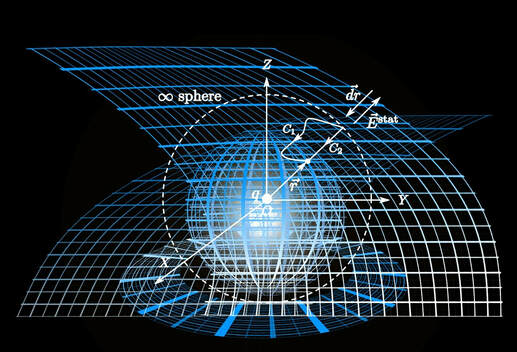

Sounds like a bold statement, doesn’t it? Well, regardless of how it sounds, this is a project I’ve been working on for a long time and which I’ve been refining for the past couple of weeks, while also doing some additional testing. So, this is not some half-baked idea like many of the things that tech evangelists write about to promote this or the other agenda. This is the kind of stuff I’d publish a paper about if I still cared about publications.

In a nutshell, the diversity heuristic is a simple metric for measuring how diverse the points of a dataset are. This is quite different from spread metrics (e.g. standard deviation), since the latter focus on the spread of a distribution and can take any non-negative value. Diversity, on the other hand, takes values between 0 and 1, inclusive. So, if the vast majority of the data points are crammed into one or two places, the diversity is 0, while if the data points are more or less evenly distributed in the data space, the diversity is 1. Interestingly, even a random set of points has a diversity score that’s less than 1, since perfect uniformity is super rare unless you are using a really good random number generator!

Also, this diversity metric is pretty fast because, well, if a heuristic is to be useful, it has to scale well. So, I designed it to be quite fast to compute, even for a multi-dimensional dataset. Because of this, it can be used several times without the computer overheating. As a result, it is fairly easy and computationally cheap to have a diversity-based sampling process, i.e. a sampling method that aims to optimize the yielded sample in terms of diversity. Naturally, a diverse sample is bound to cram more of the original dataset’s signal into it, though some information loss is inevitable.
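To make the idea more tangible, here is a minimal Python sketch of a diversity-style score and a naive diversity-based sampler. The post does not give the heuristic's formula, so everything below is a stand-in chosen for illustration, not the actual metric: the hypothetical `diversity` function uses the normalized Shannon entropy of grid-cell occupancy, and the hypothetical `diverse_sample` function simply keeps the most diverse of several random samples. It assumes the features have already been scaled to [0, 1].

```python
import numpy as np


def diversity(points, bins=10):
    """Stand-in diversity score in [0, 1] (an assumption, NOT the post's heuristic).

    Splits the unit hypercube into bins**m grid cells and returns the Shannon
    entropy of the cell occupancy, divided by its largest possible value.
    Expects features already scaled to [0, 1].
    """
    points = np.asarray(points, dtype=float)
    if points.ndim == 1:
        points = points[:, None]  # treat a 1-D input as a single feature
    n, m = points.shape
    # Map every point to the integer coordinates of its grid cell.
    cells = np.clip((points * bins).astype(int), 0, bins - 1)
    _, counts = np.unique(cells, axis=0, return_counts=True)
    p = counts / n
    entropy = -np.sum(p * np.log(p))
    # n points can occupy at most min(n, bins**m) cells.
    max_cells = min(n, bins ** m)
    if max_cells < 2:
        return 0.0
    return float(np.clip(entropy / np.log(max_cells), 0.0, 1.0))


def diverse_sample(points, size, trials=20, bins=10, seed=0):
    """Naive diversity-based sampling (hypothetical helper).

    Draws `trials` random samples without replacement and returns the row
    indices and score of the one with the highest stand-in diversity.
    """
    rng = np.random.default_rng(seed)
    points = np.asarray(points, dtype=float)
    best_idx, best_score = None, -1.0
    for _ in range(trials):
        idx = rng.choice(len(points), size=size, replace=False)
        score = diversity(points[idx], bins=bins)
        if score > best_score:
            best_idx, best_score = idx, score
    return best_idx, best_score


rng = np.random.default_rng(42)
clumped = np.full((500, 2), 0.5)   # every point sits in the same spot
uniform = rng.random((5000, 2))    # pseudo-random points in the unit square
print(diversity(clumped), diversity(uniform))

idx, score = diverse_sample(np.vstack([clumped, uniform]), size=200)
print(len(idx), score)
```

This stand-in is cheap to compute, but it depends on the choice of `bins` and becomes uninformative in high-dimensional data, where nearly every point ends up in its own cell. Treat it as a way to experiment with the concept rather than as a substitute for the heuristic described here.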
Nevertheless, the diverse sample, which usually has higher diversity than the original dataset, can be used as a proxy of the original dataset for a dimensionality reduction process, such as PCA. Interestingly, the meta-features that stem from the sample are not exactly the same as those of the original dataset, but they are good enough in terms of predictive power. So, by taking the rotation matrix of the PCA model of the sample, we can use it to reduce the original dataset, making dimensionality reduction a piece of cake.

So, there you have it: diversity can be used to reduce a dataset not just in terms of the number of data points it has (sampling) but also in terms of its dimensions. I know this may sound very simple as a process, but considering the computational cost of the alternative (not using diversity-based sampling), I believe it’s a step forward. Naturally, this is just one application of this new heuristic, which can perhaps help in other aspects of data science. Anyway, I’d love to write more about this but I’m saving it for a video I plan to do on this topic. Currently, I’m still busy with the new book so, stay tuned...

With so many ways to get a book out there, even in a fairly challenging subject such as data science, you may wonder what this process entails and what the best way to go about it is. After all, these days it’s easier than ever to reach an audience online and promote your work, all while branding yourself as a professional in the field.

Writing a book in data science is first and foremost an educational initiative, targeting a particular audience. Usually, this is data science learners, though it may be other professionals involved in data science, such as managers, developers, etc. A data science book generally tries to explain what data science can do, what its various methodologies are, and how all of that can be useful for solving particular problems (emphasis on the last part!).
If you see a book that focuses a lot on the methods, particularly those of a particular methodology, it may be too specialized to be of use to most audiences, unless you are targeting the particular niche that requires this specific know-how.

A key thing to consider when exploring the option of writing a book is a publisher. Even if you prefer to self-publish, your book must be able to compete with other books in this area and a publisher is usually the best way to figure that out. If a publisher is interested in your book, then it’s likely to be somewhat successful. Also, if you are new to book authoring, you may want to start with a publisher since there are a lot of things you’d never learn without one. In addition, a book published through a publisher is bound to have more credibility and a longer life-span.

Understandably, you may have explored the various deals publishers make with their authors and figured out that you’ll never make a lot of money by publishing books. Fair enough; you’ll probably never make a living by selling your words (although it is still possible). However, if your book is good, you’ll probably make enough money to justify the time you’ve put into this project. Also, remember that most publishing deals provide you with a passive income, even if the publisher wants you to promote your book to some extent. So, even though you won’t make a lot of cash, you’ll have a revenue stream for the duration of your book’s lifetime.

With all the data science material available on the web these days, acquiring all the relevant information and compiling it into a book is a fairly straightforward task. However, just because it is feasible doesn’t mean that it’s what the readers need. Without someone to guide you through the whole process and give you honest feedback (that’s also useful feedback), it’s really hard to figure out what is necessary to put in the book, what should be included in an appendix, and what should be mentioned in a link.
Your readers may or may not be able to provide you with this information, and if your main means of interacting with them is counting how many of them download your book or visit your website, you are just satisfying your ego! A publisher’s honest feedback often hurts but that’s what gradually turns you into a real author, namely one who has some authority in his/her written works. Otherwise, you’ll be yet another writer, which is fine if you just want to talk about writing a book or how you have written a book that you have on Amazon, things that are bound to be forgotten quicker than you may think…

Although when people think of math in data science, it’s usually Calculus, Linear Algebra, and Graph Theory that come to mind, Geometry is also a very important aspect of our craft. After all, once we have formatted the data and turned it into a numeric matrix (or a numeric data frame), it’s basically a bunch of points in an m-dimensional space.

Of course, most people don’t linger at this stage to explore the data much since there are various tools that can do that for you. Some people just proceed to data modeling or dimensionality reduction, using PCA or some other method. However, oftentimes we need to look at the data and explore it, something that is done with Clustering to some extent. The now trending methodology of Data Visualization is very relevant here and if you think about it, it is based on Geometry.

Geometry does more than just help us visualize the data though. Many data models, particularly those based on distances, use geometry to make sense of the data. I talked about distances recently, but it’s hard to do the topic justice in a blog post, especially without the context that geometry offers.

Perhaps geometry seems old-fashioned to those people used to the fancy methods that other areas of math offer. However, it is through geometry that revolutionary ideas in science took root (e.g. the Theory of Relativity), while cutting-edge research in Quantum Physics is also using geometry as a way to understand those other dimensions and how the various fundamental particles of our world relate to each other.

In data science, geometry may not be in the limelight, unless you are doing research in the field. However, understanding it can help you gain a better appreciation of data science work and the possibilities that exist in the field. After all, a serious mistake someone can make when delving into data science is to think that the theory in a course curriculum or some book is all there is to it. When you reduce data science to a set of methods and algorithms, you are basically limiting its potential and how you can use the field as a data scientist. If, however, you maintain a sense of mystery, such as that which geometry can offer, you are bound to have a healthier relationship with the craft and a channel for new ideas. After all, data science is still in its infancy as a field while the best data science methods are yet to come... |

Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.

|
