
Just today, I received this badge from one of the platforms I mentor on. This is a place where I normally volunteer some mentoring, mostly for people who can't afford to pay for it. Should you wish to explore mentoring with me, feel free to check the mentoring section of this site. Cheers!


What Is Polywork?

Polywork is a networking platform for professionals who are engaged in different activities. These can be anything from a podcast to a side gig to even a startup venture. Its key differentiator was the opportunity feature, which enabled its users to post potential collaboration scenarios for others to see and engage with alongside the usual social announcement feed. However, lately, the platform has been reduced to a simple resume site, similar to about.me, coupled with a messenger feature. Despite this questionable move, Polywork may have some potential as it's still a young company. To explore this potential, what better place to look than its data and its business impact?

Data Involved in that platform

The data involved in Polywork is manifold. For starters, there is the data each user contributes to their profile. Once deployed, this data is fairly static, but a very solid basis for various events. After all, the users of Polywork are there to interact with each other (at least in principle), so there is a strong incentive to consume this data and act on it. This triggers a variety of interactions that generate even more data, from the time stamps to the interest signals (aka "boosts") to all the text in the comments. Additionally, there are direct messages that can also be a data source, even if they are probably not read by the Polywork team, for privacy reasons. In any case, the platform may still record the who, when, and how for each one of these messages. On top of that, if a user follows someone else, there is an additional signal that may gradually build a graph depicting the connections and other relationships among the users. Beyond all this, there is also data related to when a user logs in, what device they use, how long they stay, what external links are clicked, what sites these links point to, etc.
In other words, just from the passive activities of a user, there is a large variety of data to be collected, for all users, even the less vocal ones. Naturally, someone inside Polywork is bound to have access to additional data that is collected around the users, so the datasets available are bound to be richer. But how is this data useful to Polywork? That’s a question every data professional looking at this is bound to ask.

Potential Data Products

Several data products can leverage this sort of data. By the term data products, we mean any kind of application or dashboard that provides useful insights or some kind of functionality that drives engagement and adds value to the end user. For platforms like Polywork that live off engagement, such products are not just nice-to-haves but an essential part of the user experience. Other similar platforms have thrived through the use of such products which help keep these platforms fresh and interesting. Some examples of such data products are the following:

Naturally, all this is just scratching the surface of what’s possible. However, before a data product is developed, it needs to be tied to a particular desired outcome from the business standpoint. Otherwise, it is just an intellectual exercise that may look good but not justify the resources it needs to come about. That’s why the business aspect of data products needs to be taken into consideration, both before and after they are developed, as we’ll look at in a moment.

Business Impact of Those Products

The business impact of data products can be noteworthy, enough so to justify building and maintaining them. In this case, there is little doubt that at least some of the aforementioned data products can add business value. How much exactly would depend on how each data product is implemented, how it is used in the platform, and how it ties to the other features of Polywork. Let’s look at the data products one by one and how they can add value to the Polywork business.

Final Words

All in all, there is a lot of potential in Polywork and the data it accumulates through its features (or at least did so until recently). Naturally, this company is just an example of how data can be leveraged to improve the business and make the product more engaging. Materializing this potential may not be a simple task, but it’s something feasible and worth exploring. The only prerequisite in this endeavor is a solid understanding of data work and the ability to reason around it, aka data literacy. Feel free to contact us for more information on this subject.

Lately, we've put together a new survey to better understand this area and the main pain points associated with it. It would be great if you could contribute through a response and perhaps through sharing this survey with friends and colleagues. Cheers! https://s.surveyplanet.com/arw5bz4a

Lately, I've started putting together a new course on problem-solving, targeted mainly at business people and team leaders (though any knowledge worker could benefit from it). I'm launching it in the next month or so. If you or anyone else you know is interested, you can fill in this short survey for it and join the waitlist. Cheers!

This is the second part of the Data Literacy for Professionals article

Examples of Data Literacy in Practice

Data literacy adds value to an organization in various ways, one of which is to support decision-making. Say you need to create a new marketing campaign to increase customer retention, for example. Where would you start? Would you just come up with a few random ideas, select the most promising ones, and run with them? A data-literate professional would take a step back, examine the data at hand, see if there was a way to collect additional data, if necessary, and then work with an analyst to make sense of it all. Then, upon contemplating the insights gathered from this analysis, he would find some practical alternatives and discuss them with the marketing team (which might even be his immediate subordinates). Afterward, he might want to dig deeper into a specific option or two and, after examining the corresponding data and insights further, come up with a data-backed decision to present to the marketing executives.

Another example of how data literacy would add value to decision-making could be UI enhancement, a vital part of the UX. For example, say that the site doesn't get many people to click on the "buy" button. Someone identifies this problem by observing the sales funnel and brings it to your attention. You then explore various alternatives for making the "buy" button stand out more, perhaps experimenting with its color, size, etc. Which option would you go with, however? If you are data literate, you get the help of a data scientist to design an experiment, run it, and come up with some insights as to which option yields a better click-through rate. This analysis can involve some statistical tests too. You'd then think about all this, evaluate its reliability, and decide on the most promising option for the button based on the results of this analysis. This mini-project can help improve the UI and possibly the whole UX, driving more sales for the company.
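
As a rough illustration of the kind of statistical test such a button experiment might involve, here is a minimal sketch of a two-proportion z-test in Python. The function name, the two button variants, and the click counts are all made up for illustration; in practice, a data scientist would choose the test and the sample size to suit the actual experiment.

```python
import math


def two_proportion_z_test(clicks_a, views_a, clicks_b, views_b):
    """Two-sided z-test for a difference between two click-through rates.

    Hypothetical helper for illustration; returns (z statistic, p-value).
    """
    if views_a <= 0 or views_b <= 0:
        raise ValueError("each variant needs at least one view")
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    # Pooled rate under the null hypothesis that both variants perform the same
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    if std_err == 0:
        return 0.0, 1.0  # nobody clicked (or everybody did): nothing to compare
    z = (rate_b - rate_a) / std_err
    # Two-sided p-value from the standard normal distribution
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value


if __name__ == "__main__":
    # Made-up numbers: variant A is the current button, variant B the redesigned one
    z, p = two_proportion_z_test(clicks_a=120, views_a=2400,
                                 clicks_b=156, views_b=2400)
    print(f"z = {z:.2f}, p-value = {p:.4f}")
```

A small p-value would suggest that the difference in click-through rate is unlikely to be due to chance alone. Whether that difference is large enough to matter for the business is still a judgment call, which is where data literacy comes in.
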
New products and services can also come about through data literacy. For instance, you could have a new analytics service for the users made available either on the website (if each user has an account there) or via an API. Perhaps even something geared towards a more personalized interface, based on the user's behavior on the website, an interface that can even change dynamically. All these may seem like magic to someone unaware of the potential of data but for a data-literate professional, especially someone with a bit of imagination, they are as commonplace as a coffee shop.

In the competitive world we live in, getting an edge is paramount and something that data literacy can enable. Data literacy can help solve problems faster and more reliably, come up with new viable products/services based on data, and even bring about a more efficient and transparent culture in the organization, one based on a data-driven mindset. Additionally, data literacy can help make the most of the specialists involved in data work, improve employee retention (for these and other information workers), and transform the organization into a place that keeps up with the latest tech developments. An organization like this can foster more contentment and efficiency in its employees and enable them to commit long-term. A place like this can help individual professionals in many ways while at the same time benefiting other people involved, such as users and customers. In times like these, when the economy and the market are unstable, such improvements can bring about stability and even growth on both an individual and a collective level.

Final thoughts

Data literacy isn't a nice-to-have, not anymore anyway, but a necessity for any organization that wants to keep its place in today's increasingly digital world. A company comprising data-literate people, especially managers and other knowledge workers, can leverage resources it didn't know it had because they are in the form of data.
Also, it can make it more agile as it can explore acquiring additional data resources and using them to improve existing data-related processes and services further. This company's competitors are probably doing this already, so it's no longer a luxury to invest in data literacy and use it to cultivate a data culture and make data-driven decisions. Data literacy also helps individual professionals through a series of specific improvements. For example, a mid-level manager can become more data literate and have a more modern approach to tackling the problems her team faces. Additionally, she can hire new members or train existing ones so that they can become more competent in handling and analyzing data to tackle the tasks in their workflows. Maybe even discover new ways to deal with bottlenecks and other issues that hinder efficiency. All that progress might eventually manifest as a promotion for that person and other people on her team. And if that person decides to leave that organization, she can have more options in future work placements and even new roles, thanks to her data literacy ability. Overall, data literacy is a powerful tool, or mindset and skill-set to be more accurate, for leveraging data and making better decisions accordingly. It is very hands-on and benefits people and organizations in various ways. It involves understanding data and how we govern it, analyzing it effectively, presenting and communicating the insights discovered, and protecting data/information, among other things. It entails understanding where privacy fits and taking actions to keep data related to people's identities under wraps. Data literacy is closely linked to data strategy and having a data culture in the organization. Being literate about data can also give professionals a competitive advantage over their data-illiterate colleagues and make a company more agile and relevant in the market. 
Especially when it comes to complex problems and difficult decisions, it can help bring about more reliability and objectivity to the whole matter. This leveling up can enhance the organization overall, along with everyone associated with it, be it partners, vendors, and, most importantly, its customers and users. To learn more about data literacy, feel free to explore the corresponding page of this site. Cheers!

Introduction

Different people mean different things when they talk about data literacy. For this article, so that we are on the same page, let's use the definition of "the ability to create, manage, read, work with, and analyze data to ensure & maximize the data's accuracy, trust and value to the organization" (D. Marco, Ph.D.). Note that this definition highlights a crucial characteristic of data literacy which entails coherency and collaboration within the organization, something that often reflects a particular kind of culture. I'm referring to the data culture, which is an integral part of data strategy, merging the business objectives and plans with the data world where data becomes a kind of asset. All that's great, but it may seem a bit abstract. However, data literacy is very hands-on, even if it's not as low-level work as analytics. It is also utterly significant for all sorts of professionals, particularly decision-makers. Many people talk about making data-driven decisions and having a data-driven approach to problem-solving. How many of them do it, though, and to what extent? Well, data literacy enables professionals to do just that and make data something they value and leverage for the benefit of the whole. Data literacy is beneficial for other people too. For instance, when someone works in a data-literate organization, there tends to be more transparency about how decisions are made and what different pieces of data mean. So, if you have a role that involves data in some shape or form, you can be sure that it's not a black box and that you can learn from it. Naturally, this implies that you are data literate to some extent too! Data literacy is a state of mind, a way of thinking and acting about data. As such, it has many benefits that depend on the organization and the data available to it. The fact that many companies base their entire business model on data attests to the fact that data is crucial as an asset. 
To unlock its value, however, you need data literacy.

Key Ideas and Concepts of Data Literacy

Let's delve deeper into this by looking at the components of data literacy. For starters, data literacy involves understanding data and how it is governed. This part of data literacy is vital since many organizations have lots of data that is essentially useless because it's in silos and inaccessible to those who need it. This problem is essentially a data governance one. Also, as much of it involves personally identifiable information (PII), it has to abide by specific regulations such as GDPR. Otherwise, this data may be a huge liability.

Data literacy also involves analytics, as it is when data is turned into information that it truly becomes useful. The latter we can understand better and reason with, especially in decision-making. Data in its original form is usually understandable only by computers. Analytics makes this transformation and enables others to benefit from the data. Usually, analytics work is handled by specialists, such as data scientists.

Data literacy also involves presenting and communicating data. This part of data literacy often entails reasoning about insights and exploring how they can apply to an organization's challenges. Otherwise, data has just ornamental value, which may not be enough to justify people working with it. Perhaps that's why today every data professional is assessed based on communication skills too, not just technical ones.

Finally, data literacy involves protecting the data and whatever information it spawned. It's usually specialized cybersecurity processes that ensure the protection aspect of data literacy, which also includes preserving the privacy of the people behind that data. In larger organizations, there may even be specialized professionals involved in this kind of work.

What does a data-literate professional look like, though? For starters, it's not like he stands out from the crowd.
But when that person engages in a conversation on a business topic, it becomes clear that they know how data can be used as an evergreen asset. Such a person may also undertake responsibilities related to the use of data in decision-making, be it through a data-driven marketing initiative, a cohort analysis of the customers or users, etc. A data-literate professional can undertake numerous roles, not just those related to hands-on data work. He can be a competent team leader, a business liaison, a consultant, and even an educator, promoting a data culture in the org. As long as that person has a solid understanding of data and how the organization can put the data available to good use, that person is a data-literate professional and can add value through that. Generally, data-literate professionals are very competent in leveraging data for making decisions and driving value in the org. This aspect of data literacy often involves having a sense of data and its potential. For someone else, data may be just something abstract and interesting to data professionals only. For the data-literate professional, however, it's something as powerful as a product sometimes. At the same time, it's a pleasant challenge because just like products need work before you can trade them for money, data also requires special treatment. A data-literate professional accepts this challenge and works towards making it a reality. This special treatment may involve getting the right people in a team or leveraging the existing ones, doing some mentoring even, and turning this understanding of data into a set of processes that transform data into something of value. When it comes to data literacy, there are several challenges most professionals face. I say most because people with a data background tend to find this whole matter intuitive and relatively easy. However, people who come from different backgrounds tend to struggle with data literacy in various ways.
After all, traditional education stems from a time when data wasn't something educators knew or cared about. Their data literacy skills were rudimentary at best, while they focused on educating people about those business models and concepts that were more relevant back then. That's not to say that business acumen isn't that important. It's more important, however, when it's integrated with data acumen (as Bernard Marr eloquently illustrates in his book and courses on data strategy). Data literacy is a journey for most professionals, and there are different levels to it. Maintaining a sense of humility about this matter and understanding there is always more to learn can go a long way. This isn't an easy task, especially for accomplished professionals who got far in their careers using traditional ways of thinking about assets and business processes. Perhaps through proper coaching, mentoring, and other educational tools, they can overcome the challenges that plague this journey toward complete data literacy. Data literacy is crucial in today's digital economy. As data is what some refer to as the new oil, the prima materia of many products, data literacy is the equivalent of an oil-based engine. The main difference is that it doesn't pollute and there are no practical limitations on the fuel! Nevertheless, it's not trivial as some data people make it out to be. Of course, you can plug this data into some off-the-shelf model and get it to spit out some results that you can put into some slick presentation and share with the stakeholders. However, this is often not enough or relevant. Data literacy helps people see how the data relates to the business objectives, tackling specific problems and answering particular questions. Having some fancy data model may be something interesting to boast about, but if it doesn't help the organization with its pain points, it seems like an ornament rather than something of value. 
Going back to our metaphor, it's more like a gadget than an engine that can help us traverse the distance between where we are as an organization and where we need to be. To be continued... In the meantime, feel free to learn more about data literacy on the corresponding page of this site.

At the end of last month, OpenAI released its latest product, which made (and still makes) waves in the AI arena. Namely, about three weeks ago, ChatGPT made its debut, and before long, it gathered enough traction to outshine its predecessor, GPT3. Since then, many people have started speculating on it and making interesting claims about its capabilities, role in society, business value, and future. But what about the human aspects of this technology? How can ChatGPT affect us as human beings and professionals in the next few years?

Let's start with the latter, as it's generally easier to understand. Whether you identify as a data professional (particularly one in Analytics), a Cybersecurity expert, or just someone interested in these fields, ChatGPT will affect you. As it's amoral, it may not understand how any given actions are bound to have consequences on other people, so if you become obsolete because of its work in these areas, it's hard to blame it. After all, it was just trying to be helpful! And all those people who are becoming gradually more addicted to free services, free advice, and anything that doesn't part them from their cash may be bound to feel an attraction to this technology. It's not just translators and digital artists that have real problems with this software, through the rapid increase of the supply and the lowering of the prices of their services as a consequence. If someone could get insights or cybersecurity advice from ChatGPT, it's doubtful they'd consider paying you for the same services plus the additional burden of dealing with a human being. After all, we are all flawed in some way that may trigger others, while the AI system may seem relatively perfect. As resources are becoming more scarce by the year, it's not far-fetched to expect technologies like this one to get a larger share of the market in analytics and cybersecurity services soon. How many people do you know who can tell the difference between some good advice and some not-so-good advice when the latter is phrased in flawless English and in a personalized way? And how many people have the maturity to appreciate and opt for the former, just out of principle? As for the effects of ChatGPT on us as human beings, these are not too promising either. If someone is used to getting whatever they want without paying anything, it's only natural that this person would become spoiled, a taker of sorts, with a growing appetite for more free services.
I don't know about you, but I find that I am better off being surrounded by people who are givers or at least matchers instead of takers (I've experienced plenty of the latter in my life, especially as a student). Now, this psychological corrosion may not happen tomorrow or even next year, plus it's unfair to assign all the blame to ChatGPT since other similar technologies do the same. However, what ChatGPT does that no other software has managed before is democratize this free stuff (at least to its users) and give the illusion of knowing everything well enough. In other words, a ChatGPT user may feel that other people are unnecessary and that this software is sufficient for all their knowledge needs. This subjective matter may be untrue, but good luck convincing that person that this is not a good option for them or the world overall! Technologically, ChatGPT may be a brilliant product and one that can open new avenues of research toward a more futuristic society. However, without preserving the human aspects of the world, all this technology is bound to backfire and do more harm than good. Not because suddenly the AI may decide to dominate us, but because we as a species may not be ready for this tech and the rapid changes it can (and probably will) incur. To make things worse, the cost of this tech, although not factored in by the futurists and the evangelists of OpenAI, is still there. It seems paradoxical to me that we try to conserve energy, even in the winter months, because of the rising cost of gas and electricity, even though we allow such an energy-hungry tech to consume large amounts of energy (computational power isn't cheap!). As I don't wish to end this article on a sad note, I'd like to invite you to ponder solutions to this moral problem (something that I sincerely doubt this or any other AI is particularly good at). 
For additional inspiration, I'd recommend the book The Retro Future by John Michael Greer, which covers this complex topic of progress and technology much better than I can. Maybe ChatGPT is an opportunity for us all to view things from a different (hopefully more holistic) perspective and develop some Natural Intelligence to complement the Artificial Intelligence out there. Cheers.

Don't let the somewhat philosophical title mislead you! This isn't an article about the abstract aspects of the world, but something pointing to a very real problem in our thinking and how it makes experiencing life difficult. Namely, an age-old issue with our logic and reasoning, and how, as a data modeling tool, it fails to capture the essence of the stuff it aims to understand. In other words, it's a data problem that influences how we process information and understand life in its various aspects. In more practical terms, it is the loss of information through predefined categories that may or may not have relevance to life itself.

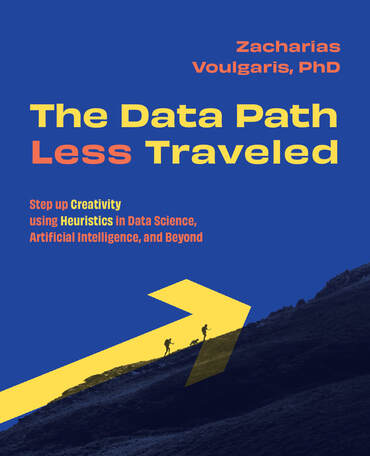

The problem starts with how we reason logically. In conventional logic, there are two states, true or false. It's the simplest possible way of thinking about something, assuming it's simple enough to break it down into binary components. If we look at a particular plant, for example, we can say that it's either alive or dead. Fair enough. Of course, this approach may not scale well for a group of such plants (e.g., a forest). Can a forest's state be reduced to this all-or-nothing categorization? If so, what do we sacrifice in the process? Things get more complicated once we introduce additional categories (or classes in Data Science). We can say that a particular plant is either a sprouting seed (still well within the ground), a newly blossomed plant (above the ground too, but just barely), a mature plant (possibly yielding seeds of its own), or a piece of wood that's lying there lifeless. These four categories may describe the state of a plant in more detail and provide better insight regarding how the plant is faring. But whether these categories have any real meaning is debatable. Most likely, unless you are a botanist, you wouldn't care much about this classification as you may come up with your own that is better suited for the plant you have in mind. Naturally, the same issue with scalability exists with this classification also, as it's not as straightforward as it seems. A forest may exist in various states at the same time. How would you aggregate the states of its members? Is that even possible? In analytics, we tend to avoid such problems as we often deal with continuous variables. These variables can be aggregated very easily and we can teach a computer to reason with them very efficiently, perhaps more efficiently than we do. So, a well-trained computer model can make inferences about the plants we are dealing with based on the continuous variables we use to describe those plants.
Practically, that gives rise to various sophisticated models that appear to exhibit a kind of intelligence different from our conventional intelligence. This is what we refer to as AI, and it's all the rage lately. So, where does conventional (human) intelligence end and AI begin? Or, to generalize, where do any categories end and others begin? Can we even answer such a question when we are dealing with qualitative matters? Perhaps that's why we have this inherent need to quantify everything, especially for stuff we can measure. But how does this measurement affect our thinking? It's doubtful that many people stop to ask questions like that. The reason is simple: it's very challenging to answer them in a way agreeable to many people. This lack of consensus is what gave rise to heuristics of all sorts over the years. We cannot reason with complexity and sometimes we just need to have a crisp answer. Heuristics provide that for us, empowering us in the process. These shortcuts are super popular in data science too, even if few people acknowledge the fact. There is something uncomfortable with accepting uncertainty, especially the kind that's impossible to tackle definitively. Some things in nature, however, aren't black and white or conform to whatever taxonomy we have designed for them. They just are, giddily existing in some spectrum that we may choose to ignore as it's easier to view them in terms of categories. Categories simplify things and give us comfort, much like when we organize our notes in some predefined sections in a notebook (physical or digital) for easier referencing. The problem with categories arises when we think of them as something real and perhaps more important than the phenomena they aim to model. John Michael Greer makes this argument very powerfully in his book "The Retro Future," where he criticizes various things we have taken for granted due to the nature of our technology-oriented culture. 
But why are categories such a big problem, practically? Well, categories are by definition simplifications of something, which would otherwise be expressed as a continuum, a spectrum of values. So, by applying this categorization to it, we lose information and make the transition back to the original phenomenon next to impossible. Additionally, categories are closely related to the binary classification of things since every categorical variable can be broken down into a series of binary variables. In the previous plant example, we can say that a plant is or isn't a sprouting seed, is or isn't a newly blossomed plant, etc. The cool thing about this, which many data scientists leverage, is that this transition doesn't involve any information loss. Also, if you have enough binary variables about something, you can recreate the categorical variable they derived from originally. All this mental work (which to a large extent is automated nowadays) makes for a very artificial worldview where everything seems to exist in a series of ones and zeros, trues and falses, veering away from the complexity of the real world. So, it's not that the world itself is very limited, but rather our logic and, as a result, our perception and our mental models of it that are limited. As a well-known data professional famously said, "all models are wrong, but some are useful" (George Box). My question is: "how much accuracy are we willing to sacrifice to make something useful from the information at hand?" If you find that heuristics are a worthwhile option for dealing with the complexity of life effectively, you'd be intrigued by how far they can go in data science and AI. There are plenty of powerful heuristics which can simplify the problems we are dealing with without information loss, all while bringing about interesting insights and new ways of applying creativity.
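
To make the earlier point about binary variables a bit more concrete, here is a minimal Python sketch of breaking the plant categories into is-or-isn't flags and then recreating the original category from them. The category labels and function names are just illustrative choices for this example, not something from a particular library.

```python
# Illustrative labels for the four plant states discussed in this article
PLANT_STATES = ["sprouting seed", "newly blossomed plant", "mature plant", "dead wood"]


def to_binary(state, categories=PLANT_STATES):
    """Break a categorical value into one 0/1 flag per category."""
    if state not in categories:
        raise ValueError(f"unknown state: {state!r}")
    return [int(state == category) for category in categories]


def from_binary(flags, categories=PLANT_STATES):
    """Recreate the categorical value from its 0/1 flags."""
    if len(flags) != len(categories) or sorted(flags) != [0] * (len(categories) - 1) + [1]:
        raise ValueError("expected exactly one flag set to 1")
    return categories[flags.index(1)]


if __name__ == "__main__":
    for state in PLANT_STATES:
        flags = to_binary(state)
        # The round trip returns the original category, so nothing is lost
        print(state, "->", flags, "->", from_binary(flags))
```

Note that the lossless part is only the step from categories to binary flags and back; whatever was lost when the plant was first squeezed into one of four categories stays lost.
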
My latest book, The Data Path Less Traveled, is a gentle introduction to these heuristics and is accompanied by lots of code to keep things down-to-earth and practical. Check it out!

Due to the success of the Analytics and Privacy podcast in the previous few months, when the first season aired, I decided to renew it for another season. So, this September, I launched the second season of the podcast. So far, I have three interviews planned, two of which have already been recorded. There are also a few solo episodes, where I talk about various topics, such as Passwords, AI as a privacy threat, and more. Since I joined Polywork, many people have connected with me on various podcast-related collaborations, some of which are related to this podcast. So, expect to see more interview episodes in the months to come.

There is no official sponsor for this season of the podcast yet, so if you have a company or organization that you wish to promote through an ad in the various episodes (it doesn’t have to be all the remaining episodes!), feel free to contact me. Cheers!

My latest book, The Data Path Less Traveled, published by Technics Publications, is going to make its official debut this Friday, September 9. If you are up for it, you can participate in a short presentation for it, along with Q&A, to get acquainted with the topic (and me). This online event is at 10 am EST (7 am PST, 4 pm CET) and you can register for it here. It's entirely free and you don't need to have any experience or expertise on this topic to follow it. I hope to see you there!


Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.

Archives

April 2024
