|

Open-source software is any piece of software that's open to review and edits/forks. In most cases, it's also free and under a GNU license (such as the GPL) or something equivalent, though when people refer to it as free, they often use the term as a proxy for freedom. As a result, most people refer to open-source software today as FOSS, which stands for Free and Open-Source Software. FOSS is also a movement of sorts that's taken hold since the earlier days of computing with people like Richard Stallman, who spearheaded the GNU initiative and has been very active in promoting FOSS throughout his life. With the advent of FOSS programming languages and FOSS operating systems (such as GNU/Linux and FreeBSD), this movement grew and is now quite established across various fields that involve programming.

As you can imagine, FOSS is also quite relevant in data science and A.I., at least lately. Most data scientists and A.I. professionals today tend to use an open-source language (many of them using Python, while the more adventurous dabble with Julia, Scala, and lately even Rust), handle open-source datasets (such as those made freely available at the UCI Machine Learning Repository, among many other sites), and work with open-source frameworks (such as Scikit-learn, MXNet, and Flow). It's doubtful that many people get into data science with any monetary investment in the tools or the datasets they need since it's a far better investment to spend money on educational resources such as books and videos marketed by a technical publisher. Interestingly, these resources have more in common with FOSS than all that mediocre stuff you find on YouTube these days, labeled as educational for some reason.

FOSS in data science (and A.I. to a great extent) is largely responsible for the immense growth of this field. Back in the old days, when I was doing my Ph.D., the best way to get into analytics, particularly machine learning, was through platforms like Matlab that come with a relatively high price tag. Nowadays, you can start your data science journey without spending any money on the software you use. This way, you can develop some skills and try out the field before deciding to stick with it. Since there are more reasons to commit to data science than not to, the easy point of entry has made data science popular, and the trend is bound to continue.

Nevertheless, it's important to note some exceptions to the FOSS paradigm, which are also relevant in data science. First of all, there is Mathematica, which is probably one of the best closed-source platforms out there, not just for data science but for any field that involves numeric data. Contrary to what its name suggests, Mathematica is a broad platform with its own built-in programming language; it's not just about math. Also, its latest version features A.I. tools, while the person behind this piece of software is a genius scientist who also came up with a novel model for describing the universe. Apart from Mathematica, there is also Matlab, which is still used by many learners of the craft, particularly in academia. Lately, however, its popularity has started to decline, partly because of its open-source clone, Octave, and partly because it pales when compared with modern data science and A.I. platforms that feature better performance and larger communities of users.

All in all, FOSS is paramount in data science work, partly due to the relevance of programming in this field. While new FOSS players come to our field (the most notable of which is Rust, which I covered briefly in the previous article on this blog), chances are that some of them are bound to stay. Things like the Jupyter notebook, for example, aren't going to disappear, even if other code notebooks have entered the scene lately, especially when it comes to the Julia language.

In any case, if you want to learn more about the various (mostly open-source) software that populates our fascinating field, you can check out my book Data Science Mindset, Methodologies, and Misconceptions. As a bonus, you can also learn about other aspects of the data science field, such as the marvelous methodologies it features, without getting all too mathy about it! It's been a few years since I authored it, but so far, it's aged quite well, just like most FOSS out there we use in data science and A.I. work. Cheers!


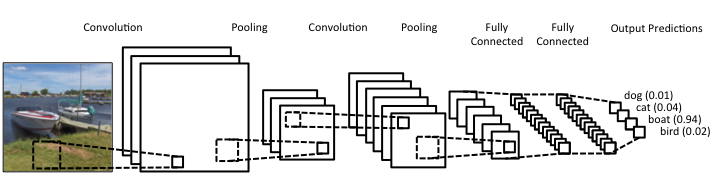

What Rust is

I may have mentioned Rust in the past, but now I’d like to talk more about it and its role in data science and A.I., as it has passed the test of time, in my view. After having delved into Rust programming a bit, enough to understand that it's much more challenging than I realized at first, I believe I can now write about it with confidence. Also, since it's not so new to me, I'm way past the infatuation stage that characterizes most people who have talked or written about it, usually shortly after they started exploring it.

So, Rust is a high-performance language, currently in version 1.51, and with a large enough community of users (and companies) to make a dent in the programming realm. There is even a Rust track in the Exercism platform, where there are dedicated mentors who can help you learn it through the carefully designed and curated programming drills on the Exercism website. What's more, there are a few interesting books on Rust, while there are also conferences and workshops for anyone serious about this language.

Rust’s key strengths

Rust isn't popular because of its particular name or its cool logo, though. Rust earned its popularity through the strengths it brings to the table and the value-adds that accompany its deployment.

First of all, it's high-performance, meaning that you can use it instead of C, C++, or even Java. That's not an easy-to-accomplish thing, and few languages have accomplished that. Also, it offers this performance while maintaining a relatively high-level approach to programming, much like most modern languages that come about.

Additionally, Rust is reliable and as safe as it gets. Many consider it to be better in that respect than even C, which has a series of memory management issues resulting in risky code. So, if you want to build a program that just works and won't make you sleep with your phone on at night (in case you'll need to fix an issue of a script you've shipped), Rust is a good option.

Finally, Rust is geared towards productivity. It's not an academic language or something a bunch of hobbyists put together, far from that. Rust is built for devs and people who are dead serious about designing and deploying software. The language's well-written documentation adds to this. At the same time, its error messages, although frustrating at first, give you some actual insight as to what's wrong with your scripts (instead of some generic error message that's more of a puzzle than any real help for debugging your code).

Rust and Data Science

When it comes to data science work, particularly machine learning and AI-related tasks, Rust has the potential of being a great asset. I say this even though I'm invested in another high-performance language, Julia, about which I've written extensively (my books on Julia) and which I continue to use to this day. However, unlike those fanboys of this or the other data science language, I'm open to new possibilities, which I'm always eager to explore. So, even though I'm a long way from being a Rust veteran, I can see its merit in our field.

So far, there are a few Rust packages for ML work, such as Smartcore and Linfa (plant juice in Italian), though, in all fairness, this codebase is nowhere near the variety and maturity of the likes of Scikit-learn in Python and the packages in the Julia ecosystem. Still, there is a lot of value Rust offers in this space, and as the community grows, we should be expecting to see the ML and A.I. libraries of Rust grow both in number and sophistication.

Final thoughts

It may seem a bit too early to tell, but it's not far-fetched to say that Rust is here to stay and make it. While high-level languages like Python had nothing more to offer than simplicity and ease-of-use (probably the main reason they made it to the data science world), Rust is closer to modern languages like Julia and Nim, which offer a serious performance boost.

Its business proposition is unquestionable, its adoption higher than many people expected, and its potential of making a dent in machine learning is hard to contest. Once you get past its eccentric programming style, you may begin to view it with the respect and fondness it deserves. So, check it out when you have a moment. Cheers!

Lately (and I use this term loosely), there's been a lot of talk about deep learning. It's hard to find an article about data science that doesn't mention Deep Learning in one way or another. Yet, despite all its publicity, Deep Learning is still conflated with machine learning by most of the people consuming this sort of article. This misrepresentation can lead to misunderstandings that can be costly in a business setting, as there can be a disconnect between the data science team and the project stakeholders. Let's look into this topic more closely and clarify it a bit.

Machine Learning is a relatively broad field that has become an instrumental part of data science. Complementary to Statistics, Machine Learning takes a data-driven approach to analyzing data. This approach involves the use of heuristics and predictive models. Most models used by data scientists today tend to fall into this category. Things like Random Forests and Boosted Trees are commonplace and powerful, and they are classic examples of machine learning. But these aren't the only ones, and lately, they have started to give way to other, more powerful models. The latter are in deep learning territory.

Deep Learning is part of AI and deals with machine learning problems. It's still an integral part of the AI field, but because of its applicability in Machine Learning, it is often considered to be part of the latter too. After all, AI has spread in various domains these days, and as predictive analytics is one domain where it can add lots of value, its presence there is considerable.

In a nutshell, Deep Learning involves large artificial neural networks (ANNs) that are trained and deployed for tackling data science-related problems. There are several such networks, but they all share one key characteristic: they go deep into the data, through the development of thousands of features, in an automated manner, for understanding the intricacies of the data. This sophistication enables them to yield higher accuracy and harness even the weakest signals in the data they are given.

Deep Learning has been quite popular lately, not just because of its innovative approach to analytics but primarily because of the value it adds to data science projects. In particular, deep learning systems are versatile and can be used across different domains, given sufficient data and enough diversity in that data. They aren't handy just for images, while newer areas of application are being discovered constantly. Additionally, deep learning systems can do without a lot of data engineering (e.g., feature engineering) since this is something they undertake themselves. In other words, they offer a shortcut of sorts for the data scientists who use them, making their projects more efficient. Finally, deep learning systems can be customized considerably, making them specialized for different domains. That's particularly useful for developing better models geared towards the specific data available to you.

Of course, the whole topic of deep learning is much deeper than all this. What's more, despite its usefulness, it's not always appropriate since conventional machine learning is also quite relevant in data science today. Moreover, there are other AI-based systems usable in data science, such as those based on Fuzzy Logic. In any case, there is no one-size-fits-all solution, which is why it's better to be well-versed on the various options out there.

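To make the idea of a network building its own features a bit more concrete, here is a minimal sketch in plain Python. It is an illustration under loud assumptions: the weights are set by hand rather than learned, the network has just two hidden units, and the function names are made up for this example. A real deep learning system would learn far more features, through training, across many layers.

```python
from itertools import product


def step(value):
    """Threshold activation: fire (1) when the input is positive, else 0."""
    return 1 if value > 0 else 0


def hidden_features(x1, x2):
    """Hidden layer: two intermediate features built from the raw inputs.

    The weights and thresholds are hand-picked for illustration only;
    in an actual network they would be found by training.
    """
    either_on = step(x1 + x2 - 0.5)  # behaves like a logical OR
    both_on = step(x1 + x2 - 1.5)    # behaves like a logical AND
    return either_on, both_on


def tiny_network(x1, x2):
    """Output layer: combine the hidden features into an XOR prediction."""
    either_on, both_on = hidden_features(x1, x2)
    return step(either_on - both_on - 0.5)


if __name__ == "__main__":
    # Show the raw inputs, the features the hidden layer derives, and the output.
    for x1, x2 in product([0, 1], repeat=2):
        print((x1, x2), hidden_features(x1, x2), tiny_network(x1, x2))
```

XOR cannot be separated by a single threshold on the raw inputs, but it becomes trivial once the hidden layer has produced the two intermediate features. That is, in miniature, the kind of automated feature development described above.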
A great place to start learning about these options in a hands-on way is my latest book, Julia for Machine Learning, where we tackle various data science problems using various machine learning methods. Check it out when you have a moment!

More and more datasets these days contain sensitive data capable of identifying the people behind those ones and zeros. We usually refer to this kind of data as personally identifiable information or PII for short. PII is a privacy concern for every data scientist or analyst working with such a dataset since if it leaks, we're all in trouble! Not just the data scientist, but also the whole organization, especially if it's complying with privacy regulations like GDPR. Let's look into this matter in more detail.

First of all, PII-related privacy is inevitable in most data science projects today in the real world. Chances are that at least some of the variables you deal with contain some type of sensitive data. These can be things like names, contact details, credit card numbers, and even health-related data (this latter kind of PII is particularly important since most of it cannot be changed, in contrast to a credit card). Even geo-location data is often under the PII umbrella though on its own it's not so sensitive because it's hard to match it to a particular individual without using some other variable too.

This matching of particular variables to specific individuals is the source of all privacy-related problems. It's not so much the fact that some people's identities are compromised that's the issue (who cares if it becomes public that I enjoy a cup of coffee at the local coffee shop every morning?) but the fact that this data is supposedly protected. When it's out in the open, it's a breach of some privacy legislation, while the organization that handles this data is liable for a lawsuit.

To make matters worse, if word gets out that a particular company doesn't protect its clients' sensitive data adequately, its reputation is bound to suffer, and its brand can be damaged. Not to mention that some of this PII can be traded on the black market, so if a malicious hacker gets hold of it, things can become even more challenging to manage.

To avoid these problems, we need to handle PII properly. You can do this in various ways, some of which we're going to explore in future articles. As I've lately delved more into Cybersecurity and Privacy, I can provide a better perspective on this subject, which can tie into data science work more practically. However, should you wish to delve into this topic a bit now, you can check out my latest video course on WintellectNow, titled Privacy Fundamentals. There I cover various practical ways of securing privacy in your personal and professional life. It's not data science-focused, but it can help you cultivate the right mindset that will enable you to handle PII more responsibly. Stay tuned for more material in the coming months. Cheers! |
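As one illustration of the "various ways" of handling PII mentioned above, here is a minimal sketch of pseudonymization: replacing direct identifiers with keyed hashes before a dataset is shared for analysis. The field names, the sample record, and the helper functions are hypothetical and chosen only for this example. Keep in mind that pseudonymized data can still be re-identified through other variables, so this is one safeguard among several and not a complete solution.

```python
import hashlib
import hmac

# Hypothetical set of columns treated as direct identifiers in this example.
PII_FIELDS = {"name", "email"}


def pseudonymize(value, secret_key):
    """Replace a value with a keyed SHA-256 token (HMAC).

    The same value and key always give the same token, so records can still
    be joined, but the original value cannot be read back from the token.
    The digest is shortened to 16 hex characters purely for readability.
    """
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]


def scrub_record(record, secret_key):
    """Return a copy of the record with its PII fields pseudonymized."""
    return {
        field: pseudonymize(str(value), secret_key) if field in PII_FIELDS else value
        for field, value in record.items()
    }


if __name__ == "__main__":
    # The key must be stored separately from the data; this one is a placeholder.
    key = b"replace-with-a-secret-kept-outside-the-dataset"
    row = {"name": "Jane Doe", "email": "jane@example.com", "purchases": 7}
    print(scrub_record(row, key))
```

A keyed hash is used here instead of a plain hash because names and email addresses are easy to guess: without the secret key, an attacker cannot simply hash a list of candidate values and compare the results.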

Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.

Archives

April 2024


|
