|

As you may already know, one of the world's top data modeling conferences, Data Modeling Zone (DMZ), is taking place this November in Stuttgart. It is an international conference for all sorts of data professionals, not just data architects, and it has an impressive bookstore. As I'll be participating in this conference as a speaker, I get to give away discounts and such. So, if you plan to register for the conference, you can use my last name (8 characters) to claim a 15% discount. With the money you save, you can treat yourself to a tour of the lovely German countryside and still have some left to buy a book or two from the aforementioned bookstore!

The knowledge vs. faith conundrum has been a philosophical debate for eons, yet it is usually geared towards abstract matters, such as life after death. So, how does this apply to a pragmatic field such as data science? Well, contrary to what many people think, most data science practitioners rely on faith to a great extent when dealing with data science matters. But why is that?

Unfortunately, most people learning the craft have a strict timetable to keep, so they don't have a chance to go in depth on the material covered. This increasingly severe time constraint is coupled with other factors, such as the plethora of "cookbooks" on the topic. These are not to be confused with actual cookbooks, which comprise various recipes, oftentimes original, tried-and-tested dishes developed by experienced chefs; those cookbooks are fine and probably offer a bigger bang for your buck than the technical cookbooks, which are basically a bunch of methods and functions, usually in a popular programming language, organized by someone who oftentimes doesn't even understand them. If you rely mainly on such sources of knowledge, you are basically putting your faith in these people and creating gaps in your understanding of the craft.
So, if you obtain technical knowledge quickly or from a source that doesn't go into much depth, you are unlikely to truly know data science. That's not to say that you shouldn't read books; far from it. Books are useful, but no matter how good they are, the best way to learn something remains the empirical approach. Going under the hood of the methods involved, implementing methods from scratch, and even experimenting with your own ideas are all good ways to learn something in more depth and remember it for longer. Also, through empirical knowledge of the craft, you are more confident about what you know and oftentimes more aware of the boundaries of your knowledge.

There is room for faith in our field, for example when you trust what your data science lead/director tells you, when you accept advice from a mentor, and when you rely on the know-how of an academic paper written by someone who knows data science in depth. However, it's good to balance it with empirical knowledge to the extent your time allows. Perhaps in abstract matters it's hard to obtain empirical knowledge, but on things that you can test yourself, the only limitations are man-made ones. Are you willing to transcend them?

Lately I created a video on Spark, its APIs, and how all this is useful as a data science tool. You can find it here, though in order to watch it in its entirety you need to have an account on O'Reilly (formerly known as Safari).

I understand that many of you came to this website today to check my weekly article on Data Science / A.I. and this announcement may be underwhelming. However, it is something that takes precedence over any article, since my published work is more worthwhile to follow than the articles I write on this or any other blog. The former goes through a certain editing process and is then published by a reputable third party, while the latter are there just to offer a fresh perspective on various data science matters that I consider worth pondering. Whatever the case, I am going to write an article this week, though it may go live later on, as I have other things going on these days. Thank you for your understanding!

If you are like most technical people, delivering a presentation can be a stressful and challenging experience. Just the thought of having to talk in front of other people, particularly those who have a say in your career development, can be daunting. However, with some planning and preparation, giving a presentation can be straightforward and even enjoyable. Check out this video I've made recently to learn more about this topic and hopefully expand your view on what a data science presentation is all about! Note that Safari, or O'Reilly Learning as it's lately called, is a paid platform that requires a subscription in order to view the various material it contains in full. Yet, with a valid account (even a temporary one), you can view not only this but a variety of other videos, as well as a range of technical books, including some of my own.

There are many mistakes that can be made in data science, many of which can go unnoticed for a while. The reason is that, unlike coding bugs, these mistakes don't throw an error or an exception, which makes them harder to spot and fix. In my view, the biggest such mistake is thinking that one aspect of data science is so significantly better than the others that the latter don't matter much.
I used to think like that back in my PhD days (my thesis was on Machine Learning and heuristics) but fortunately, I discovered the error in my thinking and started broadening my perspective on this matter, something I continue to do as I learn more about this fascinating field. Let's look into this more closely.

For starters, there are several frameworks or tool-kits available in data science today, ranging from Statistics to Machine Learning and, lately, A.I. based models. All of them have their own set of advantages as well as limitations. Many Machine Learning models, for example, particularly A.I. based ones (mainly ANNs), are very hard to interpret and are often referred to as black boxes. Stats models, on the other hand, may be easy to interpret, but they may not be as accurate, and they tend to come with a number of assumptions which may not always hold true. That's why claiming that one of these frameworks or tool-kits is the best one at the expense of others is a very shaky position.

However, with all the hype around the latest and greatest Deep Learning methods (and other A.I. based models used in Data Science), it's difficult to argue against this position. Also, with Statistics having such a good reputation in academia and proven applicability across different domains, it's also hard to argue that it's not as good a framework. This may be good in a way since it keeps us humble, but it may also obstruct progress. How can you have the nerve to put forward something new if it doesn't comply with what is considered "the best" or if it doesn't comply with the traditional approaches to data learning, such as Statistical Learning?

I'm not claiming to have a solution to this conundrum, by the way, and perhaps it's not something that can be answered simply. However, riddles of this kind that plague the data science field can be good food for thought and bring about a sense of genuine wonder about the prospects and the future of data science.
Maybe when someone asks us what the best framework of data science is, it's better to say "I don't know" and consider using different ones in tandem, instead of flocking to this or that group of people who have made up their minds about this and who are unlikely to ever change them. After all, open-mindedness is something that never gets old, at least not in a truly scientific field.

Being open-minded has been a key trait of any scientist since the beginning of Science. The scientific method is basically a practice that relies on open-mindedness, focusing on testing a hypothesis based on the evidence at hand. However, nowadays there is a trend towards a heretic behavior (for lack of a better word) when it comes to the science of data, as well as the application of A.I. in it.

Open-mindedness is not just being open about the results of an experiment though. That’s easy. Being open to other people’s ideas and beliefs is also important. It’s easy to dismiss some people, especially those who write about this matter without the training you may have in the field. Still, those people may have some interesting insights, which they often express in their articles. You don’t have to agree with them in order to gain from this and expand your perspective. However, dismissing an article because it makes use of this or that term (which in your opinion is not that relevant to the topic it tackles) is closed-minded.

That’s not to say that we should accept everything we read, however. Some of the material out there is of low informational value and can be biased towards this or that technology, for various reasons. That’s normal since the field of data science (as well as A.I. to some extent) is closely linked to the business world and is influenced by the dynamics of the markets of tools and frameworks related to data analytics. So, what do we do about all this?
For starters, we can read an article before we dismiss it as irrelevant or otherwise problematic. Also, if we don’t agree with the author about something, we can construct arguments against that point and express them without attacking the other person. There are people who are incredibly toxic and pose a threat to the field by propagating their erroneous beliefs, but fortunately, these are few. Also, they are probably beyond salvation, since they have too large a following to ever question their beliefs. Still, by going against their propaganda, we can help the people who haven’t made up their minds yet on the topic.

Perhaps that’s why the most important thing you can learn about data science and A.I. is to have a mindset that is congruent with your development as a professional, always maintaining an open mind. Just because there are fanatics in this field who are getting paid way more than they should and maintain a large following due to their charisma, it doesn’t mean that this is the best way to go. It’s not easy to be open-minded in a place where fanaticism thrives, but in the long run, it’s a viable strategy. After all, data science is here to stay, in one form or another, while the views on it that are now popular are bound to change.

It's quite enjoyable and insightful to learn about A.I., particularly NLP, as well as other data science related topics. However, with so vast a knowledge-base related to this topic, it's equally easy to forget a lot of what you learn. Enter this quiz, which can help you recap the most important points of Natural Language Processing in a fun way, without the stress that often accompanies quizzes in educational institutes, for example. This is an experimental kind of video so it may still need some refinement, something I'm going to look into in the near future. In the meantime, you can check out this quiz video on NLP here. Enjoy!

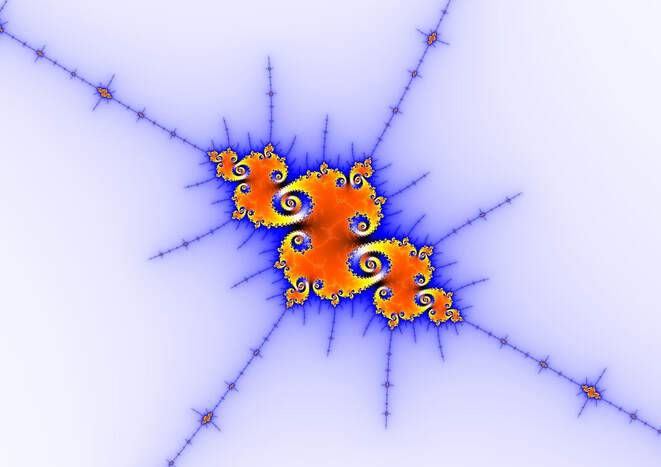

Note that this video is published on the Safari platform, O'Reilly's online library of sorts, where various publishers can exhibit their creations in digital format. In order to take full advantage of this, however, you'll need to have an account since it's a subscription-based site.

It's funny how when you think you know something, you often discover that you don't know it that well. This is particularly the case in data science, a field that holds more mystery than most people think. For example, a great many heuristics and models are based on the idea of similarity, and several metrics have been developed to gauge it. Many of them are based on distances, but others are more original in various ways.

During my exploration of the hidden aspects of data science (my favorite hobby), I came across the idea of a similarity metric that is not subject to dimensionality constraints, unlike all of the distance-based ones, while also being fast and easy to calculate. Also, this is something original that I haven't encountered anywhere else, and I've looked around quite a bit, especially when I was writing the book "Data Science Mindset, Methodologies and Misconceptions", where I talk about similarity metrics briefly.

Anyway, I cannot explain it in detail here because this metric makes use of operators and heuristics that are themselves original, part of my new frameworks of data analytics. Let's just say that it makes use of Math in a way that seems familiar and comprehensible, but has not been used before. Also, it yields values in [0, 1], with 1 being completely similar and 0 being completely dissimilar. The idea is to find a way to gauge similarity from different perspectives and combine the results, something that would unfortunately only work if the data is properly normalized. Given that all conventional ways of normalizing data are inherently flawed, this metric is bound to work only in properly normalized data spaces.
Because such spaces are more or less balanced (even if they have outliers), the average similarity of all the data points in them is always around 0.5 (neutral similarity), something that makes the metric very easy to interpret.

As with other metrics and heuristics, it's not the metrics themselves that are the most important thing, but the doors they open, revealing new possibilities (e.g. a new kind of discernibility metric). That's why I found the picture of the fractal above quite relevant, since it is all about self-similarity, a concept that led us to the discovery of a new kind of Mathematics related to Chaos. Interestingly, even with such advanced knowledge, we are unable to fully comprehend the chaos that reigns in modern A.I. systems, something that brings its own set of problems. So, I ask you to wonder for a moment how much better A.I. would be if it were developed using comprehensible heuristics, making it transparent and interpretable. Perhaps its thinking patterns wouldn't be as dissimilar to ours and we wouldn't see it as much of a threat. |
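For readers who want something concrete to experiment with, here is a minimal sketch of the conventional, distance-based kind of similarity mentioned in the post. To be clear, this is not the original metric described above, which is not disclosed here. It assumes min-max normalization and Euclidean distance, and all the function names (`min_max_normalize`, `distance_similarity`, `similarity_spread`) are hypothetical ones chosen for illustration.

```python
import math
import random


def min_max_normalize(points):
    """Rescale every feature to [0, 1] (a conventional normalization, assumed here)."""
    dims = len(points[0])
    lows = [min(p[d] for p in points) for d in range(dims)]
    highs = [max(p[d] for p in points) for d in range(dims)]
    # Guard against constant features, which would otherwise divide by zero.
    spans = [(hi - lo) or 1.0 for lo, hi in zip(lows, highs)]
    return [[(p[d] - lows[d]) / spans[d] for d in range(dims)] for p in points]


def distance_similarity(a, b):
    """Turn the Euclidean distance between two normalized points into a value in [0, 1]."""
    max_dist = math.sqrt(len(a))  # largest possible distance inside the unit hypercube
    # Clamp at 0 so floating-point rounding can never yield a negative similarity.
    return max(0.0, 1.0 - math.dist(a, b) / max_dist)


def similarity_spread(n_points, dims, seed=0):
    """Return (min, mean, max) pairwise similarity for uniformly random points."""
    rng = random.Random(seed)
    raw = [[rng.random() for _ in range(dims)] for _ in range(n_points)]
    pts = min_max_normalize(raw)
    sims = [
        distance_similarity(pts[i], pts[j])
        for i in range(n_points)
        for j in range(i + 1, n_points)
    ]
    return min(sims), sum(sims) / len(sims), max(sims)


if __name__ == "__main__":
    for dims in (2, 20, 200):
        lo, mean, hi = similarity_spread(100, dims)
        print(f"dims={dims:3d}  min={lo:.3f}  mean={mean:.3f}  max={hi:.3f}")
```

Running it for a few dimensionalities should show the similarity values bunching together as the number of dimensions grows, which is one way to see the dimensionality constraint that distance-based metrics are subject to.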

Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.


|
