|

Once you have acquired sufficient expertise in the field, you may wonder what the best option for your career would be. Do you take the safe path (i.e. a 9-5 job), the more adventurous route (i.e. a start-up, your own or someone else’s), or something else altogether (e.g. consulting)? Whatever the case, this is something to consider sooner or later, and no book is likely to give you the answer you need (though there are good books out there offering general career advice for data scientists).

The best career option for you greatly depends on your level of expertise and your ambition. If you have a lot of the former but a limited supply of the latter, you’d probably opt for consulting, as it is a career path that is highly competitive, so anyone who is merely competent is bound to struggle. That’s quite different, though, if you have a consulting company, which is more akin to entrepreneurship than anything else.

The start-up option is more suitable for you if you are both an expert in your field and ambitious. The long hours, combined with the inherent uncertainty of the situation, make it highly unsuitable for people who just want to make a living. Besides, most data science start-ups try to break new ground, so it’s definitely not an option for the faint-hearted.

If you are at the beginning or the middle of your career and what you yearn for is a good benefits package, so that you can buy a house or something, then the 9-5 job option is the one for you. Who knows, if you are hard-working enough, you’ll get promoted at some point and be able to fetch a higher salary, one comparable with the money of the other career possibilities. Working in a large company is a somewhat different case, since such an environment tends to attract data scientists of high expertise, though their ambition may still be limited.
Of course, an environment like this is extremely competitive and challenging in many ways, which is why after a few years you may decide to do one of the following: either leave and work in a smaller company, so that you can attain a steady salary to pay your mortgage, or start your own company (something quite common among the more adventurous data scientists).

Whatever you decide, it’s important to remember that there is no future-proof option out there. Even full-time employees in a company are bound to outlive their usefulness, in which case the company may make them redundant or move them to a role that has little impact or prospects. Also, choosing a career path in data science that makes sense financially may not be congruent with your growth as a data scientist. Contrary to what many people think, your professional prowess has little to do with the number of years in the field, or your familiarity with what’s popular in the market now. That may land you a 9-5 job, but it will not necessarily make you a better data scientist. This, however, is a topic for another blog post.

Having been in pretty much every one of these work situations, I can say that at the end of the day what matters is what makes you happy. If you look at Mondays with dread, maybe it’s time to reconsider your career choices. If you are like me and can’t wait for the work week to start, then you’re probably on the right track, career-wise. This doesn’t mean that you should become complacent, though. What’s good for you now may not be optimal for you in a few years’ time, since new data will have presented itself.


I’ve talked about cyber security in the past and even described what a robust cyber security system would look like (i.e. the system I coined as Thunderstorm). However, I never really talked about how essential cyber security is in a data science setting (not on this blog anyway). Is it really that necessary or just a nice-to-have?

Clearly, a lot of work is done off-site nowadays when it comes to data analytics on big data. This doesn’t mean that data scientists always work remotely (though that would be quite feasible, if management approved). Actually, a lot of the heavy lifting is done in the cloud or on computer clusters that may not be where the data scientists work. So, in order for the transportation of data (both raw and processed) to be safe and private, a certain level of security is necessary. This usually takes the form of SSL, VPN, or other secure channels for transporting information efficiently and without much risk (there is always risk, especially with today’s black-hat hackers!).

Of course, the need for cyber security increases when parts of the team need to exchange information, data, and programming code related to the data science project at hand. These people may be in the same general location, but more often than not, their data exchanges take place over the Internet (e.g. over the company’s private GitHub account). Fortunately, most of these systems have security embedded in them, so cyber security in this case is not something we become aware of. Imagine how things would change, though, if the embedded security protocol got broken and you had to rely on a different avenue for exchanging all this sensitive information with your colleagues.

Beyond these more or less apparent cases where cyber security is necessary, there are several others, more relevant to the data science tasks. For example, anonymization of data falls under the same umbrella, although it is quite different from the aforementioned cyber security processes.
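To make the anonymization point a bit more concrete, here is a minimal Python sketch of one common first step: replacing a direct identifier with a keyed hash before a dataset is shared. The function names, the `email` field, and the toy records are all hypothetical, and strictly speaking this is pseudonymization rather than full anonymization, since the remaining columns could still identify someone.

```python
import hashlib
import hmac
import secrets


def pseudonymize(value: str, key: bytes) -> str:
    """Return a keyed SHA-256 digest (hex) that stands in for the raw identifier."""
    return hmac.new(key, value.encode("utf-8"), hashlib.sha256).hexdigest()


def pseudonymize_records(records, field, key):
    """Copy each record, replacing `field` with its keyed digest."""
    return [{**row, field: pseudonymize(row[field], key)} for row in records]


# Hypothetical records; in practice the key would be stored apart from the data.
key = secrets.token_bytes(32)
records = [
    {"email": "alice@example.com", "purchases": 3},
    {"email": "bob@example.com", "purchases": 7},
    {"email": "alice@example.com", "purchases": 1},
]
masked = pseudonymize_records(records, "email", key)
```

The same identifier always maps to the same digest under a given key, so records can still be grouped or joined per user, while someone without the key cannot simply hash a list of known e-mail addresses and look for matches.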
Naturally, the space of a blog article is insufficient for doing this topic justice. Suffice it to say that cyber security may be ubiquitous, but it’s definitely not something to take for granted. If you could enrich your knowledge of this discipline, I’d urge you to do so. One way to manage that (or at least get started) is through my Ethics for Data Science video, as well as my latest book.

Although many people nowadays are still skeptical about the prospects of Julia as a data science programming language, the Julia community doesn’t seem to pay much attention to this viewpoint. In fact, as the community of Julia users grows and more and more people outside the US use it, its main conference, JuliaCon, is starting to adapt accordingly. This year, for the first time ever, it took place outside Boston, namely in Berkeley, in the San Francisco Bay Area. This coming year, it is scheduled to take place in London, UK.

Although JuliaCon has a strong presence in India too, with a version of it having taken place in Bangalore in the autumn of 2015, it has remained primarily an American event. Yet, this coming year it is going to take place in Europe for the first time, something that few people would have imagined a few years ago. If you think about it, however, it makes sense, since lots of European programmers and scientists have been using Julia for a while now. There is even a course on Julia programming from the University of Glasgow, by Dr. T. Papamarkou. This is a Master’s level course, by the way, so you can tell that they don’t take Julia lightly in the UK!

It is also worth noting that the UK has a tradition in Julia, since the majority of the books published on the language come from a British publisher. Of course, most of them fail to do justice to the language, which is constantly evolving, making it very elusive for anyone who wants to write a book about it (trust me, I have been there!). Yet, it goes to show that there is a lot of interest in learning and mastering it.
This is quite remarkable, considering that Julia is an imported technology, while the programmers’ community in general tends to be quite conservative towards new languages.

What does all this mean for data scientists? Well, a lot, if you think about it. Julia is becoming more of an international language, appealing to professionals around the world, while transcending cultural barriers. It’s no longer some fancy toy for tinkerers, nor some novelty for the coding enthusiasts. Even Google has recognized it as a viable alternative to Python for their Deep Learning framework (TensorFlow), while other frameworks also include it as an alternative (e.g. MXNet). Moreover, although the majority of the talks at JuliaCon used to be about new packages of the language and the new features of the Base package, lately the focus has shifted, since many people talk about its applications in scientific projects. Some of these projects are directly related to data science.

Naturally, a single event is not going to change people’s minds about the language. People who are committed to using Python or R will continue to use their tool of choice, no matter how much traction Julia gets. However, those open-minded enough to try out alternatives now have no excuse for not giving Julia a shot. After all, this language was made to be congruent with other programming tools, including Python and R (as well as C and Java), so it’s not really a “this or the other” choice here. On the contrary, you can program in Julia and in other languages, as there are several bridge packages available for this purpose. That’s one of the many things you can discuss with other Julia users, if you decide to go to the Julia conference of 2018, in London.

Nowadays there are many options out there for getting something in print. Some of them are for digital publications only, while others cover various media (including videos).
As someone builds a reputation, more options become available to them, and the possibility of getting published under one of the more recognizable names becomes more tangible. After all, what better way to promote one’s work than an established channel, right? Well, things may be more complicated than they appear on the surface.

The publication process involves four main stages: getting a contract going, producing the book, promoting the book, and then collecting royalties based on the sales. A bigger publisher is bound to be adept at the third stage, since it has well-established channels for promoting its authors, but it can screw you over in the other three stages. A smaller publisher may require some help promoting your book, but it’s not anything excessive (as in the case of self-publishing). Let’s look in more detail at the three stages where the big publishers fall behind.

The first stage takes a while. While a small publisher may be fairly straightforward about it, a larger publishing house may take its sweet time to settle on an agreement about a title. You are basically expected to do all the work for them, including market research, and prove to them beyond a shadow of a doubt that the book you are going to author is going to be successful. When I was experimenting with a big publisher, this took about a month.

The second stage is torture. A bigger publisher has a big reputation to uphold, so it’s not going to take any risks. Every milestone of the book-writing project is meticulously monitored. Regular meetings with a member of the production team are not uncommon. Although these may be helpful in some cases, the whole thing feels more like a full-time job. Eventually, you may come to see the whole project as a drag. Being with a small publisher doesn’t involve this much bureaucracy, while the project remains a creative endeavor to a large extent. Also, changes in the outline are permissible.

The fourth stage is also a bit strange.
Although I haven’t reached that stage with a big publisher, it is quite clear from the contract that the royalties are not going to be that much (a typical commission is about 12%), while the book may be bundled with other titles for marketing purposes. Being with a smaller publisher usually guarantees a higher royalty percentage, while you may have a say in how the book is sold (you may even create events to promote and sell your book, with the publisher’s support).

Also, a larger publisher may choose to discontinue the book production process at any time, effectively breaching your contract. Although you could theoretically take legal action against them, it is usually not worth the effort, since the costs involved make the whole process pointless. Besides, would you be willing to finish a project with a company that has openly tried to stop it? Smaller publishers are more honorable in that respect and develop wholesome relationships with their authors.

Finally, with the data science field changing so rapidly, publishers may be quite cautious about what book-writing projects they undertake. So, a larger publisher is bound to go with the safer options, producing books that are on more or less mainstream topics. If you have a different topic in mind, or a different angle for a topic, then too bad. Smaller publishers are more willing to take risks in that respect.

Of course, that’s not to say that all small publishers are great. There are small publishers that are a total waste of time. However, if you do your research, you can find a small publisher that makes sense for your book project. For me that publisher was (and still is) Technics Publications. What would yours be?

So, my latest video is now available online at the Safari portal. I didn’t post this yesterday, as I had already published an article for the blog. As I have been writing more articles than I can get published on DSP, I had to resort to this blog again.
Also, I am not currently working on a book, so I have more time for writing for other channels (e.g. this blog, beBee, etc.). Anyway, if you have a subscription for Safari, check out my video. I’m certain it would be worth your time. As always, I’m open to feedback via the “contact” page of this blog.

Being one of the first supporters of Julia, at least for data science, it saddens me to talk to people in the tech industry and find out that they have never heard of this language. It’s even worse when this ignorance of it comes from people involved in data science and A.I., people who should have at least tried it. With Julia becoming more and more relevant among programmers (see the latest newsletter to get an idea), it seems paradoxical that it is still relatively obscure in people’s minds, particularly people involved in data science.

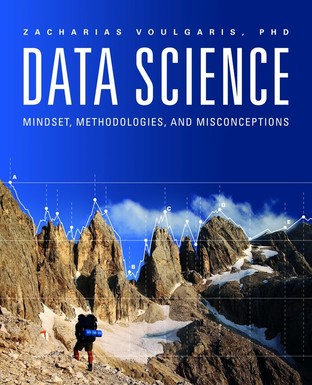

One reason why this happens is that the institutions that deliver data science know-how (I wouldn’t call it education yet, since they don’t cover soft skills or the whole mindset aspect of the craft) are ignorant of Julia. This makes sense in a way, since their all-knowing instructors are experts in the mainstream languages of data science, namely Python, Scala, and R. So, if the people you trust to teach you about data science are rookies in Julia, they’ll probably not even mention it, just like people in computer science don’t talk much about Quantum Computing, or security experts about how conventional security systems are sitting ducks when it comes to code-breaking that could be performed by QC systems.

Another reason is that people learning data science are overwhelmed by the numerous technologies and tools of the field. As a result, they pick what to learn and tend to gravitate towards the tools that have the most literature around them. Also, these tools tend to have been tried and tested the longest, so they carry less risk. Since there are enough risks in getting into a new field, people tend to want to minimize additional ones if they can help it, so they give Julia a pass.

Moreover, Julia has gained a lot of traction in academia, since many researchers are open-minded enough to give it a try, while they are also fed up with the (oftentimes proprietary) systems they use. Matlab may be great if someone else pays for the license to use it, but it’s doubtful that you’d pay for it yourself after your studies are over, especially if you end up with a measly salary as a junior data science researcher. Because of all this, Julia may have started to appear as an academic programming language to some people, something that is good for researchers but not for people in the real world.

Of course, all these ideas about the Julia language are nothing but misconceptions about it.
After all, it doesn’t try to replace any other language, since it is highly compatible with many other programming languages, such as Python, R, C/C++, and even Java. So, if you feel like you have to choose between Julia and the language you are comfortable with, then you are probably gravely misinformed about the language. There is a reason why the company that develops it and supports it is doing so well. There is also a reason why many companies are using it (though they don’t always talk about it, for obvious reasons).

So, if you still find Julia an obscure programming language for data science, you may want to divert your skepticism towards those who try to ignore it, for their own reasons. Maybe those people have formed views about it based on ignorance, rather than experience with it. Perhaps if you take the time to learn it and use it a bit, you’ll change your mind about it.

Can Someone Develop the Data Science Mindset without Having to Be in the Field for Several Years?

8/13/2017

Short answer: yes. Longer answer: definitely, as long as they make a conscious effort to cultivate the necessary parts of this mindset and integrate them into a functional whole. Easier said than done, right? Perhaps. Maybe that’s why some companies ask for someone who has 15+ years of experience in the field, even if the field didn’t exist 15 years ago! What they may really be asking for is someone who knows what this field entails and knows how to make things happen, using the corresponding methodologies. So, the question that naturally arises is “how can someone get this understanding of the field without having to spend a large part of their career in it?”

There are several strategies to accomplish that, none of which are easy or something that you can learn in a bootcamp. Even really good data science courses may not be sufficient for this purpose. The reason is that the mindset of a data scientist is very diverse and not something you can put into a syllabus.
There is a reason why the brightest data science practitioners seek a mentor, or some kind of personal learning experience, in order to gain some mastery of the craft. Yet, as I’ve explained in the Mentoring in Data Science video, the mentor is not there to answer all your questions, even if they could answer most of them. The role of the mentor is to help you become your own mentor eventually. Of course, there are exceptional people out there who don’t require a mentor, since they know everything they need to know, or they have the resources and resourcefulness to obtain this knowledge on their own. When I meet one such person I’ll be sure to blog about them!

Apart from being part of a mentorship, you can learn about the mindset of the data scientist by practicing science in a data analytics setting. This is quite different from taking this or the other tool, applying it, and then creating some insightful visuals from the results. Practicing science also involves conducting experiments, asking deep questions, and challenging yourself and what you know. It’s realizing that all scientific theories are disprovable and not taking anything as gospel, since you are secure in the knowledge that everything in science is in flux. The only thing that’s perhaps immune to this constant change is the mindset, the essence of the role of the data scientist. One robust way to attain this understanding is to strip away all the transient aspects of the role, one by one, through scientific research. In other words, you need to become the craft, rather than merely practice it like a technician of sorts.

In my latest book I underline several aspects of the data science craft that I’ve found, through both experience and research. They are relevant and useful for bringing about the data science mindset in someone. Of course, it is next to impossible to cover all the angles in a single book, but it is a good start.
Applicable to all levels of data science practitioners, this book can at the very least make you fascinated by data science and motivate you to learn more about it, without getting consumed by the techniques or the aspects of it that are more in vogue these days (e.g. artificial intelligence). After all, just like everything else in science, data science is more of a process than anything else. It’s up to you to make it an insightful and intriguing one...

A few years back, working remotely was a sci-fi idea, something people would look forward to in that futuristic society that they would one day inhabit. Over the years, due to certain technological advances, this remote possibility has turned into a tangible option for many jobs, including those related to data science.

Working remotely is also something that many companies (especially start-ups) offer as part of their package deal to new hires. Because, who doesn’t want to avoid the commute a few days a month and focus on what’s important in their work? Besides, unless you are a PM or something, you probably don’t need to be in the office all the time to attend meetings and other responsibilities that require your physical presence. In fact, with modern collaboration systems (e.g. Slack) and real-time communication platforms (e.g. Zoom), even meetings can take place virtually.

So, what’s stopping people from embracing the remote work possibility full-time? Well, there are several factors, but they all boil down to two things: trust and efficiency. Most companies don’t trust their employees enough to grant them the remote working option for every day of the week. Also, the belief that people work better (more efficiently) when they are in physical proximity is one that’s hard to shake from people’s minds.
These ideas are valid to some extent, so before blaming the companies for not allowing you to do your data science work in the comfort of your home (or local coffee shop), better take a look at the other side of this partnership. Most information workers today may have the technical skills they need for their work, but they may be lacking when it comes to other skills that are essential for being able to work remotely in an efficient manner. Namely, things like self-discipline, good communication, and adaptability are not as common as you would expect. Also, not everyone is able to organize their work when on their own. Still, there are plenty of people who have all these qualities (I’ve worked with quite a few myself), so they are not something unfathomable.

If you think about it, every PhD student cultivates these skills during her project. Meetings with advisors / supervisors may take place in a physical location, but most of the time you are on your own, oftentimes during time periods when others are resting. So, being able to work remotely basically boils down to having a strong sense of responsibility and self-leadership.

Having the option of working remotely in a data science setting makes sense for other reasons too. Typically, a data scientist liaises with a small number of people in the organization and, unless they are new to the role, they know how to carry a conversation quite well and convey all the relevant information succinctly and effectively. Not every data scientist is an orator, but even the less social ones know how to communicate well with various kinds of audiences. So, physical presence is not really a requirement for this.

Also, with most of the data science work taking place in a remote location anyway (e.g. the cloud), a data scientist can manage even from home, as long as they have a secure connection set up (e.g. a VPN). So, physical presence in the company is again more of an optional thing, rather than a necessity.
Finally, day-to-day data science doesn’t need a lot of resources, other than access to a computer cluster, usually in the cloud, and a fairly decent computer, so being inside a company building doesn’t always make things easier. In fact, all the distractions and space limitations may make the whole matter more difficult for the employee, not to mention yield an additional cost for the company.

Perhaps sharing the same physical location with your co-workers has advantages that no VoIP system can offer. However, making physical presence a requirement, rather than just an option, is an antiquated practice that is bound to give way to more practical and more preferable possibilities in the future, such as full-time remote working.

Over the years I have been a bit harsh on Statistics, especially ever since I got exposed to the propaganda that Stats is the way to go in Data Science. This idea that statistical analyses would be able to help us tackle big data problems didn’t (and still doesn’t) make any sense, especially if you have run experiments on various data analytics methods, statistical and otherwise, for several years. Although statistical methods have merit, they have often proven less effective or efficient than machine learning methods, especially A.I. methods, like ANNs. Yet, statistics is still useful in some ways.

Data analytics involves more than just building models. Before we reach that stage where we have a dataset that we are ready to use for predicting or analyzing something, to build a data product or derive some useful insights, we need to build that dataset. To do that, we often need to get our hands dirty by doing a lot of experiments with the data itself, using a variety of methods. Some of these methods derive from statistics. For example, we may need to explore the relationship between two variables (or across all the pairs of variables available). This is made possible with various methods, such as correlation and covariance.
Even if these tools are suboptimal, they are a good starting point and in many cases, they may even suffice. Also, PCA and SVD remain very popular dimensionality reduction methods that are under the statistics umbrella.

Another example where statistics comes in handy is when you need to check the validity of a hypothesis. Although there are some simulation-based methods that can do that, statistics has a variety of tools that cover several possibilities of variables and their distributions, enabling us to test our hypotheses in a methodical and rigorous manner. Of course, we may still need to do some analysis beyond that, to establish the stability of our results, but there is no doubt that statistical tests can be useful as a first step.

Finally, when it comes to sampling, statistics is usually our go-to framework. This set of simple techniques for obtaining a subset of a larger dataset may seem, well, simplistic, but it’s essential. After all, even the most sophisticated machine learning models are bound to fail (over-fit) if sampling isn’t done right. There is a reason why statistics became a popular data analytics framework, and it’s quite likely that sampling played an important role in this (though I’ll need to run some tests to establish an exact measure of the likelihood!).

So, even if A.I. and machine learning are the foxy way to go when it comes to data science, statistics has a place in the data scientist’s toolbox too. Plus, with so many people in data science focusing on the new and better tools that are in vogue these days, maybe a differentiator of a competent data scientist in the future will be how well she can handle statistical concepts and carry out basic tasks in a methodical manner. Besides, if there is one thing that statistics can teach us, it’s being methodical and scientific in how we conduct our analyses, qualities that are timeless in the data science field and foxy in their own way.
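As a rough illustration of the statistical tools mentioned in this post, here is a minimal Python sketch of covariance, Pearson correlation, and stratified sampling, written with the standard library only. The function names and the toy data are hypothetical, and the stratified sampler is just one simple way to sample; a real project would more likely rely on an established library.

```python
import math
import random
from collections import defaultdict


def covariance(xs, ys):
    """Sample covariance of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    return sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys)) / (n - 1)


def pearson(xs, ys):
    """Pearson correlation: covariance scaled by both standard deviations."""
    return covariance(xs, ys) / math.sqrt(covariance(xs, xs) * covariance(ys, ys))


def stratified_sample(rows, label_of, fraction, seed=0):
    """Draw the same fraction from every class so class proportions are kept."""
    rng = random.Random(seed)
    groups = defaultdict(list)
    for row in rows:
        groups[label_of(row)].append(row)
    sample = []
    for members in groups.values():
        k = max(1, round(len(members) * fraction))
        sample.extend(rng.sample(members, k))
    return sample


# Hypothetical toy data: y is an exact linear function of x.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0 * v + 1.0 for v in x]

# Hypothetical imbalanced dataset: 80 rows of class "a", 20 rows of class "b".
rows = [("a", i) for i in range(80)] + [("b", i) for i in range(20)]
subset = stratified_sample(rows, label_of=lambda r: r[0], fraction=0.1)
```

Sampling each class separately is what keeps the 80/20 class balance in the subset; a plain random sample of ten rows could easily over- or under-represent the smaller class, which is one way sampling that "isn't done right" hurts a model later on.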
I’ve talked about Spark in my books and I’d made the prediction that it was a very promising big data platform, back when it was still a novelty. With the enthusiastic support of Databricks (the main contributor to Spark’s codebase) and a variety of data developers (data scientists who specialize in coding and in building data science tools), Spark became a force to be reckoned with. In fact, even people who were using Hadoop as their go-to big data framework started to shift to Spark, while their relationship with Hadoop was reduced to using merely its file system. Yet, even with all these developments, there was something lacking in the Spark framework: integration capability with Julia, a new rising star in the programming world, and in data science lately.

I was one of the first data scientists who openly supported Julia (while other data scientists were choosing to play it safe and stick with what they knew). Even though I had acquired sufficient expertise in both R and Python to not need yet another programming language in my toolbox, I learned this new language with whatever information I could dig up from the web (there were no good books on the language at the time). Julia’s advantages over other programming languages were too many and too powerful to ignore, so I learned it and started using it for my data science projects, in parallel with Python. In fact, one of the first Julia scripts I wrote aimed to help me organize my various Python scripts, so that I could easily pinpoint a particular function from a bunch of .py files in a folder on my work computer. Yet, Julia was not widely adopted by the data science community and it’s quite likely that its lack of integration with Spark was one of the main causes of this.

Lately, things have started to shift. A couple of people created a couple of Spark packages for Julia, the most active of which is Spark.jl. Although still in its development stage, the whole project seems promising, because it shows that it is possible to link Spark and Julia, even if the connection has to take place through Java (which is one of the languages Julia can bridge to, something unfathomable for other “promising” new languages, like Go).

Naturally, Julia is a self-sufficient tool, so it does not need Spark or any other similar framework to be useful for data science. However, with many people following this or the other big data ecosystem with great zeal, it is unlikely that Julia will get the place it deserves in data science without making it appealing to these people. Unmistakably, learning Julia is a walk in the park for most data scientists (it has elements of both Python and R, while Scala is very similar to Julia in terms of logic).
Yet, knowing how to write a Julia script and actually using such a script in production, as part of a codebase built around Spark, are somewhat different matters! Also, even though Spark doesn’t need Julia either, it has a lot to gain by incorporating all the Julian data scientists, since there is no doubt that it’s easier to use Julia than it is to use Scala when it comes to data science applications. Will Julia and Spark form a synergy that will transform data science for years to come? Possibly. How? Well, letting go of certain attachments to a certain programming paradigm would definitely help. After all, if you know how to program well, learning to use another programming language, even if it is from a different paradigm, is not as much of a challenge as some people think. Besides, isn’t pushing the envelope in tech a big part of data science anyway? |

Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.

|
