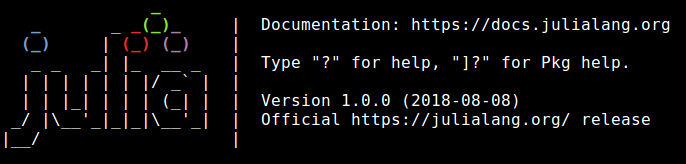

Introduction

The previous week has been intense: I was working on part of a proposal for a new project, attending a conference, and sorting out a few things related to my publications. With all that going on, it was natural that I didn’t post anything on the blog, even though I wanted to. Since my focus is always on quality, I didn’t want to publish a rushed post or a simple announcement. That’s why I waited until now to get a new post out.

The Event of the Decade

On 8/8/18 (August 8, 2018) the new release of Julia came out. This wasn’t just any release, though, but the big one: 1.0. It is really hard to overestimate the importance of this release, even if the most conservative Julia users feel that it will take a few months before the full force of v. 1.0 reaches the world. After all, just because Julia is now production-ready doesn’t mean that everyone using it can benefit in the same way, since the packages people depend on may take some time to become fully compatible with the new release. Nevertheless, those of us who prefer to rely primarily on our own code can experience the benefits of Julia right now.

Whatever the case, Julia has now entered a new era, having proven itself to be robust and faster than ever before. To give you an example, at the conference there was a talk about how Julia is applied in Robotics, via a specialized package that a Robotics researcher developed recently. Even though this researcher had previously worked with C++ on the same project, he eventually shifted to Julia for the vast majority of the code, since it was good enough (i.e. sufficiently fast and reliable) to perform challenging optimization-related tasks in real-time. To be exact, the operations were 36% faster than real-time, enabling a robot operation frequency of 1000 Hz, at least in the simulations he was conducting. At the time of this writing, no other language has accomplished that without significant dependencies on C libraries.

Ramifications of Version 1.0 in Data Science and A.I.

But how does all this affect us, as data science and A.I. professionals? Well, Julia isn’t evolving merely in the Base package or in the fairly niche application of Robotics. In fact, there are now full-fledged packages that cover a variety of data science applications, including deep learning models. At the conference there was a talk about the Knet package, for example, which is a deep learning package built entirely in Julia. Personally, I don’t know of any other deep learning tool that has been built entirely in a data science language (I don’t consider C++ to be such a language, by the way, since data scientists mainly use high-level languages). What’s more, this deep learning tool has performance comparable to that of other, more established frameworks, while in one of the benchmarks it outperformed all of them.

But data science is not just deep learning. A significant part of it has to do with more conventional methods, mainly deriving from Statistics. What about Julia’s role in all that? Well, Julia has a number of fairly mature packages in Stats, including Bayesian Stats. What’s more, there is a new book being written right now on Stats with Julia, by a couple of academics who teach Stats at a university in Australia. So, it’s safe to say that Julia is pretty evolved in this aspect of data science too.

More specialized parts of data science, such as Graph Analytics, also have corresponding packages in Julia, and the LightGraphs package I talked about in my Julia for Data Science book is still out there, now better than ever. Data engineering packages also exist, and there are several packages on optimization too, something data science can benefit from greatly when tackling its more challenging problems.

Now What?

From all this, I believe it’s fair to say that the age-old argument that “Julia is not ready for DS / A.I. because x, y, z” is now as ridiculous as the belief that the number of available libraries is what makes a language more suitable for data science. Sure, packages can help, but that’s mostly due to their quality, not their quantity, while how fast a language runs is an important consideration when analyzing the truckload of factors in a modern data model. That’s not to say that Python, Scala, and other data science languages are not useful any more, but ignoring the value of Julia in the data science / A.I. arena is silly and to some extent unprofessional.


Zacharias Voulgaris, PhD

Passionate data scientist with a foxy approach to technology, particularly related to A.I.
